Algorithms and Insurrection

By Trey Poche | January 2021

Compelling stories of security failures and racial bias will likely emerge when journalists better understand the storming of the Capitol on Jan. 6. But did social media algorithms also play a role?

Facebook’s own research suggests so. Two-thirds of people who joined extremist groups on Facebook did so because the platform’s opaque recommendation algorithms referred them to those groups.

Before the insurrection, with COVID quarantines keeping people at home in front of televisions, The Social Dilemma documentary caught much of the nation’s attention with its scathing criticisms of Facebook, Twitter and other social media giants. It was so influential that Facebook, often attacked in the film, responded by saying the film “buries the substance in sensationalism.”

Still, the point was inescapable for a growing number of users, who are beginning to understand how susceptible they are to the algorithmic pumping of misinformation on social media, and how social media literacy may help remedy the problem.

Predictive algorithms are not necessarily bad. They serve us what they think we like, whether we want to watch more cat videos or sports highlights, and they are often helpful.

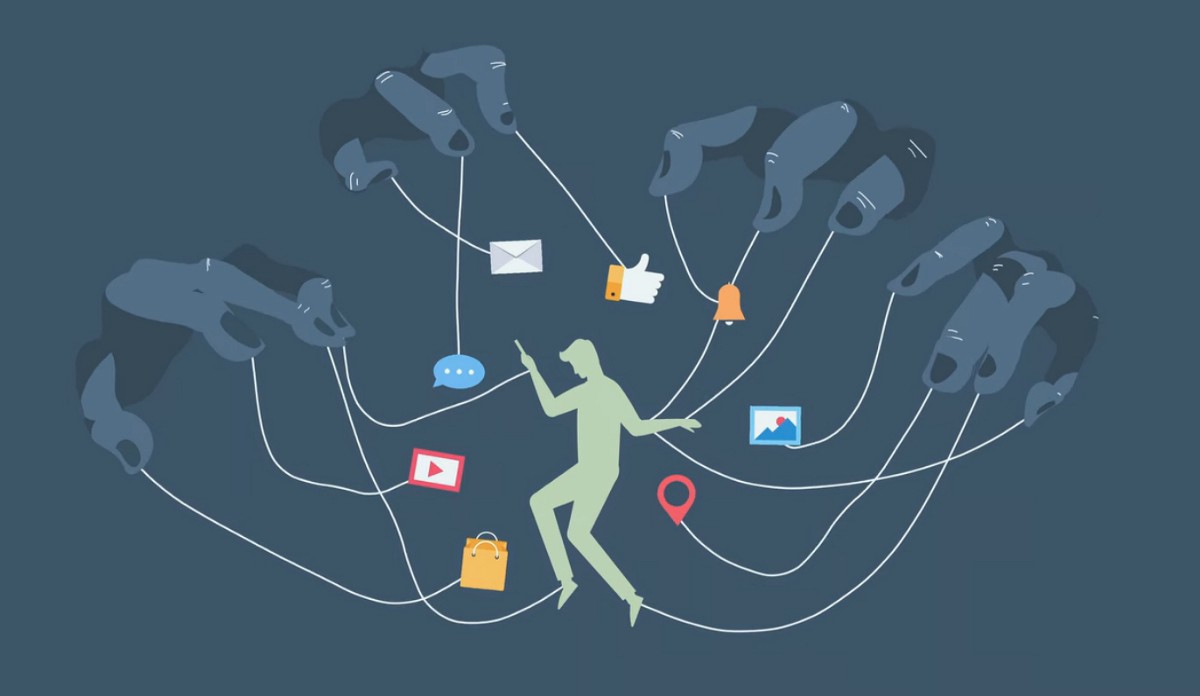

However, algorithms are more frightening when we consider that all of our online actions, from Facebook “likes” to time spent on different pages, are analyzed and fed back into the algorithm to make it better at predicting what we want, sometimes before we know what we want.
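To make that feedback loop concrete, here is a toy sketch in Python. It is not how Facebook or any real platform works; the signal weights, topic names and function names are all invented for illustration. It only shows the basic idea: every like and every second of viewing is folded back into a score that decides what gets shown next.

```python
from collections import defaultdict

# Hypothetical weights: how much each signal counts toward "interest".
LIKE_WEIGHT = 1.0
SECONDS_WEIGHT = 0.01


def update_interest(interest, topic, liked, seconds_viewed):
    """Fold one observed interaction back into the user's interest scores."""
    interest[topic] += LIKE_WEIGHT * liked + SECONDS_WEIGHT * seconds_viewed


def rank_topics(interest, topics):
    """Order candidate topics by current interest score, highest first."""
    return sorted(topics, key=lambda t: interest[t], reverse=True)


topics = ["cats", "sports", "politics"]
interest = defaultdict(float)  # every topic starts at 0.0

# The user lingers on a political post and likes it, then skims a cat video.
update_interest(interest, "politics", liked=True, seconds_viewed=90)
update_interest(interest, "cats", liked=False, seconds_viewed=10)

# The next feed is ordered by what the user just did.
feed = rank_topics(interest, topics)
```

After a single liked political post, politics already sits at the top of the feed. Repeat the loop thousands of times per user and the ranking increasingly reflects what keeps that user scrolling.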

From the perspective of social media companies, a trigger is any kind of content that will keep a user engaged online. Social media companies have long had “slot-machine-like” scrolling features and photo-tagging, all designed to keep users online and ads in front of them, but now algorithms are so exact they can exploit weaknesses in human psychology.

How then does the algorithm do this? How can an algorithm present a piece of information to us that ends up overwhelming our human weaknesses or biases?

Algorithms process so much information about individuals that they can then leverage this demographic and internet behavior data to predict what will best trigger each user. In the hyper-partisan, polarized American political climate of today, political content is particularly effective in triggering users to stay online.

Whether you lean right or left, you are susceptible to political triggers on social media. Just as the algorithm can serve you more and more cute cat videos, books or shoes, it can serve you an endless stream of content in line with your existing worldview. But that does not mean the political information is true or made by good-faith actors.

One of the best ways to trigger someone is to present them with false or heavily biased information that is compatible with their worldview. The first time someone is faced with false information they may be skeptical, but what happens when the algorithm keeps serving up similar and equally suspect information? Individuals’ worldviews slowly start to line up with the misinformation.

When an algorithm tailors different “news” for each user, everyone starts to live in their own information reality. And with social media, this is happening on the scale of millions of users. Millions of people are being sucked into false information realities: conspiracy theories.

The conspiracy theory problem doesn’t remain in the confines of social media; conspiracy theories have real consequences for society. When a person living in their distinct information reality eventually comes into contact with a different point of view, they will spend more time denigrating and arguing against that viewpoint than engaging with it. When we encounter these people online or in the real world, we can’t relate to them. Arguments get heated and friendships are broken.

Far beyond the scope of individuals, conspiracy theories pumped by algorithms make their way into the public debate. Politicians become aware of them and play into them to raise their popular support, even using them to implement policy.

The Social Dilemma skillfully lays bare algorithmic manipulation for average Netflix viewers. As more people begin to recognize the problems cataloged in The Social Dilemma, public opinion is mounting against social media firms: eight in ten Americans say they do not trust Big Tech to make content decisions, according to Reuters.

While the immediate causes of the Jan. 6 insurrection are important to uncover, how many people would have marched to the Capitol if social media algorithms had never targeted them with conspiracy-laden, extremist content in the first place?